Why Lovable Websites Are Tanking in Google (And How to Fix It)

Quick Answer: Why Do Lovable Sites Struggle with SEO?

Lovable is a brilliant way to build a good-looking website in minutes, but it has a serious SEO problem. Here's the short version:

Lovable builds client-side rendered React single-page apps. Your pages don't exist as HTML until JavaScript runs in the browser.

Most search engines and AI crawlers don't run JavaScript properly. They see an empty shell instead of your content.

Google can eventually render your pages, but it's unreliable. Indexing is delayed, inconsistent, and often incomplete.

Social platforms and AI tools like ChatGPT, Claude and Perplexity see nothing. Your meta tags and content are invisible to them.

The fixes range from quick wins (meta tags, sitemap, content depth) to structural changes (prerendering or migrating off Lovable).

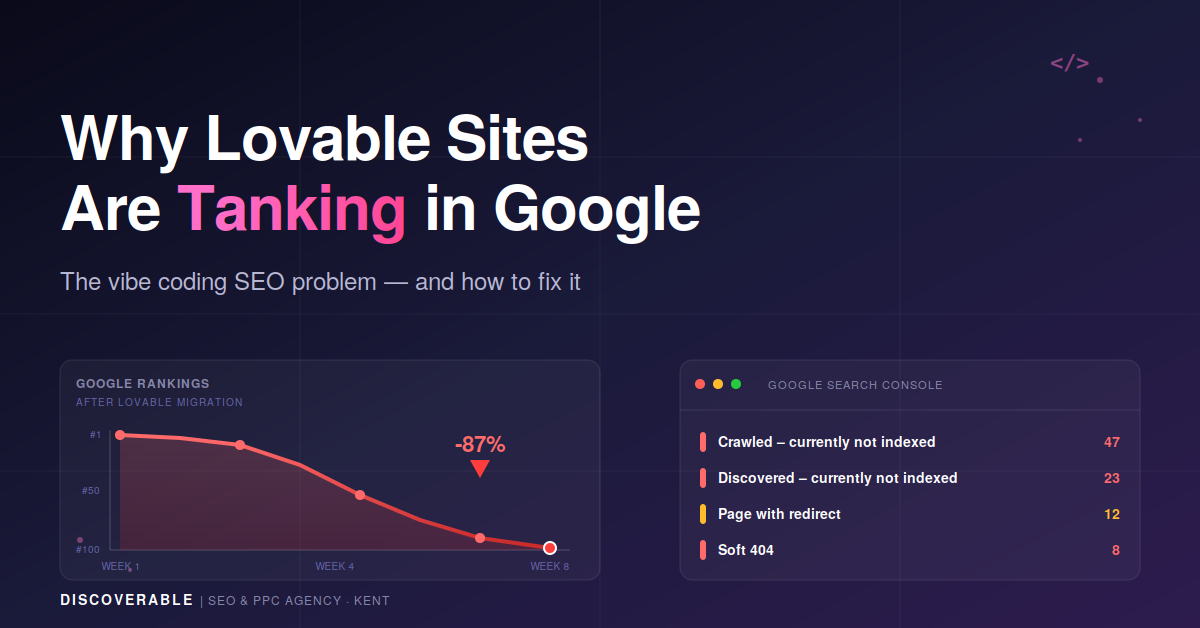

If you've built a site with Lovable and you're watching your rankings drop, or you've never ranked in the first place, you're not alone. We've seen multiple Lovable sites tank in Google over the last few months, and the cause is almost always the same. Here's what's going on and how to fix it.

Think this is happening to your site? We'll run a free 10-minute Lovable indexing check and tell you exactly what Google can and can't see. Request a free check →

1. What's Actually Happening with Lovable SEO

Lovable has exploded in popularity as the poster child of vibe coding - describing what you want in plain English and watching an AI build it for you. It's genuinely impressive. You can go from idea to live website in an afternoon.

The trouble starts a few weeks later, when you check Google Search Console and see messages like "Crawled – currently not indexed" or "Discovered – currently not indexed" on pages that should be ranking. Or your pages are technically indexed, but Google has no idea what they're about, so you rank for nothing.

This isn't a content problem or a backlink problem. It's a technical SEO problem baked into how Lovable builds websites. Before you can fix it, you need to understand what Lovable is actually doing under the hood.

2. Why Lovable Sites Are Built This Way

Lovable's own official documentation spells it out clearly: Lovable builds modern web applications using React and Vite, which means your app is a client-side rendered (CSR) single-page application (SPA).

Let's unpack what that means.

Traditional websites vs Lovable

A traditional website built on WordPress, Squarespace or Webflow sends the browser a fully assembled HTML page for every URL. When Google's crawler visits yoursite.com/about, the server hands over a complete HTML document with the title, meta description, headings and body content already in place. Google reads it, indexes it, done.

A Lovable site works differently. When anyone visits your site - Google, a human, or an AI crawler - the server sends back a nearly empty HTML shell plus a bundle of JavaScript. The browser then runs the JavaScript, which builds the page content and inserts it into the DOM. Every "page" on your site is really just a different state of the same single application.
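To make the difference concrete, here's a minimal Python sketch of what a crawler that doesn't run JavaScript can actually read from each kind of response. The two HTML strings are hypothetical stand-ins (not real Lovable or WordPress output), and `visible_text` is an illustrative helper, not how any particular crawler is implemented.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects the body text available in raw HTML, without running any JavaScript."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._in_body = False
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == "body":
            self._in_body = True
        elif tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "body":
            self._in_body = False
        elif tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that sits inside <body> and outside script/style.
        if self._in_body and self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical client-side rendered shell: an empty root div plus a script tag.
SPA_SHELL = """<!doctype html>
<html>
  <head><title>My Site</title></head>
  <body>
    <div id="root"></div>
    <script type="module" src="/assets/index.js"></script>
  </body>
</html>"""

# Hypothetical server-rendered page: the content is already in the HTML.
SERVER_RENDERED = """<!doctype html>
<html>
  <head><title>About us</title></head>
  <body>
    <h1>About us</h1>
    <p>We design and build garden rooms.</p>
  </body>
</html>"""

print(repr(visible_text(SPA_SHELL)))        # prints ''
print(repr(visible_text(SERVER_RENDERED)))  # prints 'About us We design and build garden rooms.'
```

The shell has a title but no body text at all, which is roughly the position a non-rendering crawler is in when it requests a bare single-page app.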

Why Lovable chose this approach

Single-page apps feel fast for users. Navigation between pages is instant because nothing reloads; the app just swaps the visible content. It's also much simpler for an AI like Lovable to generate, because it only needs to produce one React codebase rather than a multi-page architecture with server-side logic.

The trade-off is SEO. And for a lot of Lovable users, that trade-off is being made without them realising it.

3. How Google Actually Handles Lovable Sites

Google can handle JavaScript sites. It does, every day. But the process is more complicated and less reliable than traditional HTML rendering, and that's where Lovable sites run into trouble.

The two-phase indexing problem

According to Google's own JavaScript SEO documentation, Googlebot processes JavaScript sites in two phases:

Phase 1 - Crawl: Googlebot fetches your URL and reads the initial HTML. For a Lovable site, this is effectively empty. There's a <div id="root"></div> and a script tag, and that's about it.

Phase 2 - Render: Googlebot queues your page for rendering by its Web Rendering Service (a headless Chrome), which executes the JavaScript and produces the real content. Only then does Google see what your page actually says.

Google's documentation is clear: "The page may stay on this queue for a few seconds, but it can take longer than that." In practice, that "longer than that" can mean days, weeks, or months for some pages. Research has suggested JavaScript-heavy pages can take up to 9 times longer to index than static HTML.

Flaky indexing: the thing that actually hurts

Even when Google does render your page, the results aren't consistent. This is what the SEO community calls flaky indexing: the same page might be rendered perfectly on one crawl, then come back as an empty shell on the next. Google's own Tom Greenaway confirmed at Google I/O that "the final render can actually arrive several days later".

For you, that means:

Some pages get indexed. Others don't. You can't predict which.

Pages that were ranking can drop out of the index when Google re-crawls and fails to render.

Your content, meta descriptions and structured data become a lottery. Google might see them this week and not next week.

The AI crawler problem

This is the part most people miss. AI crawlers like GPTBot, ClaudeBot and PerplexityBot don't run JavaScript at all. They crawl your site, receive the empty HTML shell, and move on.

If you care about being cited in ChatGPT, recommended in Claude, or appearing in Perplexity answers - and in 2026, you should - a bare Lovable site is completely invisible to these tools. We've covered the growing importance of AI search visibility before, and Lovable's architecture is a serious blocker for it.

4. Where You'll See the Damage First

If your Lovable site is struggling with SEO, here's what you'll typically see, roughly in the order it shows up:

Broken social link previews. When you share your homepage on LinkedIn, X or Slack, the preview is generic or wrong. This is the earliest warning sign, because social platforms never run JavaScript when generating previews.

Missing pages in Google Search Console. You submit a sitemap, and Google reports pages as "Discovered – currently not indexed" for weeks on end.

Inconsistent rankings. A page ranks on Monday, drops on Wednesday, reappears on Friday. Classic flaky indexing.

Zero visibility in AI tools. Ask ChatGPT or Claude about your business and they have no idea you exist, even though your site has been live for months.

Thin content warnings. Google's URL Inspection tool shows your page has "content" but suspiciously little of it, because it captured the shell before JavaScript ran.

If any of this sounds familiar, the good news is that most of these problems have practical fixes.

Recognising these symptoms on your own site?

We'll audit your Lovable build, pinpoint exactly which pages Google is missing, and show you the fastest path to recovery - no fluff, no sales pitch.

Get a free Lovable SEO audit

5. 7 Lovable SEO Fixes You Can Apply Right Now

Here are seven practical steps, ordered from easiest to most involved. Work through them in order.

1. Switch to a custom domain immediately

If your site is still on a .lovable.app subdomain, move to a custom domain before doing anything else. A branded domain consolidates your link equity and means the SEO work you put in isn't tied to a subdomain you don't control. Lovable supports custom domains natively.

2. Sort your meta tags on every route

Every single page needs a unique title and meta description. This is where most Lovable sites fail on day one. Because Lovable generates pages as application states rather than real pages, duplicate titles across routes are depressingly common.

Prompt Lovable with something like:

"Add unique SEO meta tags - title, meta description, canonical URL, Open Graph tags, and Twitter Card tags - to every page route. Use react-helmet-async to manage them per route."

Titles should be under 60 characters, descriptions should be between 140 and 160 characters, and every page should have a canonical tag pointing to the correct URL on your custom domain.
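If you'd like to check those rules rather than eyeball them, here's a rough Python sketch that audits a page's HTML against the limits above and flags titles shared across routes. The `PAGES` dictionary is hypothetical sample data and the helper names are our own. One important assumption: you need to feed it the rendered HTML for each route (for example, copied from the browser's dev tools or a prerendered snapshot), because the raw response from a client-side rendered site won't contain per-route tags.

```python
from html.parser import HTMLParser


class HeadTagParser(HTMLParser):
    """Pulls the title, meta description and canonical URL out of an HTML string."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""
        self.description = None
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def parse_head(html: str) -> HeadTagParser:
    parser = HeadTagParser()
    parser.feed(html)
    parser.close()
    return parser


def audit_page(html: str) -> list[str]:
    """Returns a list of problems measured against the guidelines in this article."""
    head = parse_head(html)
    title = head.title.strip()
    problems = []
    if not title:
        problems.append("missing title")
    elif len(title) >= 60:
        problems.append(f"title is {len(title)} characters, aim for under 60")
    if head.description is None:
        problems.append("missing meta description")
    elif not 140 <= len(head.description) <= 160:
        problems.append(f"description is {len(head.description)} characters, aim for 140-160")
    if not head.canonical:
        problems.append("missing canonical link")
    return problems


def duplicate_titles(pages: dict[str, str]) -> dict[str, list[str]]:
    """Maps each title used on more than one route to the routes that share it."""
    routes_by_title: dict[str, list[str]] = {}
    for route, html in pages.items():
        routes_by_title.setdefault(parse_head(html).title.strip(), []).append(route)
    return {title: routes for title, routes in routes_by_title.items() if len(routes) > 1}


# Hypothetical rendered HTML for two routes that share a default title.
PAGES = {
    "/": "<html><head><title>My App</title></head><body></body></html>",
    "/pricing": "<html><head><title>My App</title></head><body></body></html>",
}

print(duplicate_titles(PAGES))  # prints {'My App': ['/', '/pricing']}
print(audit_page(PAGES["/"]))   # prints ['missing meta description', 'missing canonical link']
```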

3. Build a proper sitemap and robots.txt

Lovable doesn't generate a sitemap automatically. You need to prompt it to create one. Ask for:

A sitemap.xml at the site root, listing every public route with lastmod dates and priorities.

A robots.txt that allows all crawlers, references your sitemap, and explicitly allows AI bots like GPTBot and PerplexityBot if you want AI visibility.

Once both are live, submit the sitemap in Google Search Console and Bing Webmaster Tools.
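If you'd rather generate the two files yourself and drop them into the project's public folder, the sketch below shows roughly what they should contain. It's a minimal Python example, not Lovable's own output: the domain, routes, dates and priorities are all placeholders, and the final check uses Python's standard `urllib.robotparser` purely to sanity-test the rules.

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

SITE = "https://www.example.com"  # assumption: replace with your custom domain

# Hypothetical public routes: (path, lastmod date, priority).
ROUTES = [
    ("/", "2026-01-15", "1.0"),
    ("/about", "2026-01-10", "0.8"),
    ("/pricing", "2026-01-12", "0.8"),
]


def build_sitemap(site: str, routes: list[tuple[str, str, str]]) -> str:
    """Builds a sitemap.xml string following the sitemaps.org 0.9 schema."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for path, lastmod, priority in routes:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = site + path
        ET.SubElement(url, "lastmod").text = lastmod
        ET.SubElement(url, "priority").text = priority
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


def build_robots(site: str, ai_bots: tuple[str, ...] = ("GPTBot", "PerplexityBot")) -> str:
    """Builds a robots.txt that allows all crawlers and names AI bots explicitly."""
    lines = ["User-agent: *", "Allow: /", ""]
    for bot in ai_bots:
        lines += [f"User-agent: {bot}", "Allow: /", ""]
    lines.append(f"Sitemap: {site}/sitemap.xml")
    return "\n".join(lines) + "\n"


def robots_allows(robots_txt: str, bot: str, url: str) -> bool:
    """Checks whether the given robots.txt text lets `bot` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)


sitemap_xml = build_sitemap(SITE, ROUTES)
robots_txt = build_robots(SITE)

print(robots_txt)
print(robots_allows(robots_txt, "GPTBot", SITE + "/pricing"))  # prints True
```

Save the two strings as `sitemap.xml` and `robots.txt` at the site root, then confirm both load in a browser before submitting the sitemap.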

4. Add structured data (JSON-LD)

Structured data is how you tell Google what your content actually is - a product, an article, an organisation, a list of FAQs. Most Lovable sites have zero schema markup, which is a huge missed opportunity.

At minimum, add:

Organization schema on the homepage, including your name, logo, website URL and social profiles.

BreadcrumbList schema on every inner page.

Product, Article or FAQPage schema where relevant.

Validate everything with Google's Rich Results Test before calling it done.
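For reference, here's a small Python sketch of what the Organization and BreadcrumbList markup looks like once it's serialised. The business name, URLs and social profiles are invented placeholders, and the helper functions are illustrative. Bear in mind that on a Lovable site the resulting script tag only helps if it ends up in the HTML the crawler actually receives, for example in the static index.html or in a prerendered page.

```python
import json


def organization_schema(name: str, url: str, logo: str, same_as: list[str]) -> dict:
    """schema.org Organization markup for the homepage."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,
    }


def breadcrumb_schema(trail: list[tuple[str, str]]) -> dict:
    """schema.org BreadcrumbList markup; `trail` is (name, url) from the homepage down."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }


def to_script_tag(schema: dict) -> str:
    """Wraps the schema in a JSON-LD script tag ready to paste into a page's HTML."""
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    payload = json.dumps(schema, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical business details, for illustration only.
org = organization_schema(
    name="Example Garden Rooms",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    same_as=["https://www.linkedin.com/company/example-garden-rooms"],
)
crumbs = breadcrumb_schema([
    ("Home", "https://www.example.com/"),
    ("Pricing", "https://www.example.com/pricing"),
])

print(to_script_tag(org))
print(to_script_tag(crumbs))
```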

5. Write real content, not placeholder fluff

Lovable is excellent at generating UI. It is not excellent at generating content that ranks. The default output is usually a hero section, three feature cards with ten words each, and a call-to-action button. Google has nothing to index against real search queries when your page is 90% design and 10% content.

If you want to rank, you need substantive content: proper paragraphs, headings that map to what people actually search for, and supporting pages covering related topics. Our guide on how to improve your website rankings covers the fundamentals of what this looks like in practice.

6. Submit and monitor in Google Search Console

Google Search Console is the only way to see how Google actually sees your Lovable site. Set up a property for your custom domain, verify ownership (DNS TXT record is the cleanest method), and submit your sitemap.

Then use the URL Inspection tool to check individual pages. It will show you exactly what Googlebot receives, whether it rendered successfully, and which resources failed. For Lovable sites specifically, you want to look for:

"Page isn't indexed" warnings.

"Discovered - currently not indexed" status.

Any message about JavaScript resources being blocked or failing to load.

Check this weekly, not monthly.

7. Consider prerendering if SEO is critical

If you've worked through the first six steps and you're still struggling, prerendering is the structural fix. A prerendering service intercepts crawler requests to your site and serves a fully rendered HTML version, while normal users still get the fast, interactive React experience.

Established services like Prerender.io, and newer Lovable-focused ones like LovableHTML, solve exactly this problem. Setup is usually a DNS change, with no code rewrites needed.

Prerendering is the difference between relying on Googlebot to render your JavaScript every time (unreliable) and giving it pre-rendered HTML (reliable). Sites that rank consistently from a Lovable foundation are almost always using prerendering behind the scenes.
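Conceptually, the routing decision a prerendering layer makes looks something like the Python sketch below. This is a simplified illustration, not how Prerender.io or LovableHTML are actually implemented: the crawler token list is deliberately short and not exhaustive, the `GPTBot/1.0` user-agent string is shortened for the example, and the snapshot store is a hypothetical in-memory dictionary.

```python
# Illustrative, non-exhaustive substrings found in crawler user-agent headers.
CRAWLER_TOKENS = (
    "googlebot",
    "bingbot",
    "gptbot",
    "claudebot",
    "perplexitybot",
    "facebookexternalhit",
    "twitterbot",
    "linkedinbot",
    "slackbot",
)


def is_crawler(user_agent: str) -> bool:
    """True if the user-agent header contains one of the known crawler tokens."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_response(user_agent: str, path: str, snapshots: dict[str, str], spa_shell: str) -> str:
    """Serves a prerendered snapshot to crawlers and the normal app shell to everyone else."""
    if is_crawler(user_agent) and path in snapshots:
        return snapshots[path]
    return spa_shell


# Hypothetical data: the empty shell, and one stored snapshot of fully rendered HTML.
SPA_SHELL = '<html><body><div id="root"></div></body></html>'
SNAPSHOTS = {"/pricing": "<html><body><h1>Pricing</h1><p>Plans from 20 per month.</p></body></html>"}

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
BROWSER_UA = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36"

print("Pricing" in choose_response(GOOGLEBOT_UA, "/pricing", SNAPSHOTS, SPA_SHELL))  # prints True
print("Pricing" in choose_response("GPTBot/1.0", "/pricing", SNAPSHOTS, SPA_SHELL))  # prints True
print("Pricing" in choose_response(BROWSER_UA, "/pricing", SNAPSHOTS, SPA_SHELL))    # prints False
```

The snapshot must contain the same content a human visitor sees after JavaScript runs; serving crawlers something different is cloaking, which is a separate problem you don't want.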

6. When Lovable Isn't the Right Choice for SEO

Being honest: Lovable is the right choice for plenty of projects. Internal tools, client dashboards, prototypes, apps behind a login - all fine. Small marketing sites where organic search isn't the primary channel can work too, especially with the fixes above applied.

| Platform | How pages render | Out-of-box SEO | AI crawler visibility | Best suited for |
|---|---|---|---|---|
| Lovable | Client-side React SPA | Poor | None without prerendering | Internal tools, prototypes, MVPs, apps behind a login |
| Next.js | Server-side or static | Excellent | Full | Custom builds where SEO and performance both matter |
| Astro | Static with optional hybrid | Excellent | Full | Content-heavy marketing sites and blogs |
| Webflow | Static HTML | Excellent | Full | Design-led brand sites, agencies, startups |
| WordPress | Server-side (PHP) | Excellent | Full | Blogs, content sites, directories, large marketing sites |
| Squarespace | Server-side | Excellent | Full | Small business and creative sites with minimal dev overhead |
| Shopify | Server-side | Excellent | Full | Ecommerce of any size, especially with a large product catalogue |

Lovable isn't the right choice when:

Organic search is your main growth channel.

You're in a competitive vertical where every ranking signal matters (news, finance, legal, ecommerce).

You need to rank hundreds or thousands of pages reliably.

Maximum AI and LLM visibility is important to your strategy.

You're running an ecommerce site with a large product catalogue.

For projects in any of these buckets, we'd usually recommend a properly built website design on a platform with server-side rendering or static generation - Next.js, Astro, or a traditional CMS like WordPress. The build takes longer, but you start ranking rather than fighting to be seen.

For ecommerce businesses in particular, the SEO penalty of a pure Lovable setup usually isn't worth it. Shopify, WooCommerce or a headless setup with proper SSR will serve you far better.

FAQ: Lovable SEO Questions

Is Lovable good for SEO?

Not out of the box. Lovable has the technical features you need - per-page meta tags, sitemaps, structured data support - but because everything renders through JavaScript, crawlers often don't see any of it. With the right fixes (unique meta tags per route, proper sitemap, structured data, substantive content, and ideally prerendering), a Lovable site can rank. Without them, it typically won't.

Why is my Lovable site not showing up on Google?

The most common reason is flaky indexing caused by client-side rendering. Google crawls your page, sees an empty HTML shell, queues it for JavaScript rendering, and either delays rendering for days/weeks or renders it inconsistently. Check Google Search Console's URL Inspection tool to see exactly what Googlebot is receiving.

Can AI search engines like ChatGPT see my Lovable site?

Mostly no, not without prerendering. AI crawlers like GPTBot, ClaudeBot and PerplexityBot don't execute JavaScript, so they see the empty shell Lovable serves. If AI visibility matters to you, prerendering is effectively required.

Will migrating to Lovable tank my existing site's rankings?

There's a real risk, yes. If you're moving from a server-rendered site (like WordPress or Webflow) to a Lovable SPA, you're swapping reliable indexing for flaky indexing. We'd strongly recommend a detailed SEO audit and a prerendering plan before any migration, not after rankings have dropped.

What's the best alternative to Lovable for SEO-heavy projects?

It depends on the project. For content-heavy marketing sites, WordPress, Webflow or Squarespace are hard to beat for out-of-the-box SEO. For more custom builds, Next.js or Astro give you the modern development experience Lovable offers, but with proper server-side rendering or static generation. For ecommerce, Shopify or a headless setup are the standard choices.

Does Lovable work for local SEO?

With all the fixes above applied, yes - but the same concerns apply. Local SEO depends heavily on consistent structured data (LocalBusiness schema), accurate NAP (name, address, phone) information and a Google Business Profile. Your Google Business Profile works regardless of how your site is built, but your on-page signals don't: on a Lovable site, Googlebot might not see your LocalBusiness schema on any given crawl, which can hurt local pack visibility.

How long does it take for a Lovable site to get indexed?

Traditional HTML sites can be indexed within hours. Lovable sites typically take days to weeks, and some pages may never get reliably indexed without prerendering. If your pages have been "Discovered - currently not indexed" for more than a month, the architecture itself is likely the problem, not your content.

Need Help Fixing Your Lovable Site?

If you've built a site with Lovable and it's not performing the way you expected, you don't necessarily need to rebuild from scratch. Most of the issues we've covered here are fixable - some with a few well-placed prompts, others with prerendering or a targeted rebuild of critical pages.

At Discoverable, we help businesses across Kent, London and the South East diagnose and fix technical SEO problems like this every week. Whether you're running an ecommerce site, a B2B lead-gen site, or a local service business, the principles are the same: make sure Google can actually see what you're publishing.

If you want a second opinion on your Lovable site's SEO, or you're weighing up whether to stick with it or migrate to something more SEO-friendly, get in touch and we'll take a look. We can run a full technical audit, prioritise the fixes that will move the needle fastest, and help you avoid the common traps that are costing other Lovable users their rankings.

Fix your Lovable site's SEO - properly.

We've helped UK brands recover indexing, rebuild rankings, and migrate off Lovable when it wasn't the right fit. Whether you need a second opinion or a full done-for-you rebuild, we're ready to help.

Trusted by UK brands · Typically reply within 1 working day